Carol Espy-Wilson is PI for multi-site NSF speech recognition grant

Professor Carol Espy-Wilson (ECE/ISR) is the principal investigator for a two-year, $600,000 National Science Foundation Collaborative Research award, “Multilingual Gestural Models For Robust Language-Independent Speech Recognition.” This multi-site grant includes researchers at the Stanford Research Institute (SRI), Boston University and Haskins Laboratories. Espy-Wilson’s former student Vikramjit Mitra (EE Ph.D. 2011) is the principal investigator on the portion of the grant going to SRI.

The researchers will develop a large-vocabulary speech recognition system based on articulatory information.

Current state-of-the-art automatic speech recognition (ASR) systems typically model speech as a string of acoustically defined phones and use contextualized phone units, such as tri-phones or quin-phones, to model the contextual influences of coarticulation. Such acoustic models may suffer from data sparsity, and they may fail to capture coarticulation appropriately because the span of a tri- or quin-phone's contextual influence is fixed rather than flexible. In small-vocabulary tasks, however, research has shown that ASR systems that estimate articulatory gestures from the acoustics and incorporate these gestures into the recognition process model coarticulation better and are more robust to noise.
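For readers unfamiliar with context-dependent phone units, the short sketch below illustrates the two limitations mentioned above. It expands a phone string into (left context, center, right context) units with a context width chosen in advance (width 1 for tri-phones, width 2 for quin-phones), and it counts how many distinct units are possible. The phone labels and the inventory size of 40 phones are illustrative assumptions, not figures from the grant.

```python
def context_units(phones, width):
    """Expand a phone sequence into context-dependent units.

    Each unit is a (left_context, center, right_context) tuple.
    width=1 yields tri-phones, width=2 yields quin-phones. The same
    fixed window is applied to every phone, whatever its actual
    coarticulatory reach.
    """
    units = []
    for i, center in enumerate(phones):
        left = tuple(phones[max(0, i - width):i])
        right = tuple(phones[i + 1:i + 1 + width])
        units.append((left, center, right))
    return units


def num_possible_units(num_phones, width):
    # Every combination of left context, center and right context is
    # a distinct model unit, so the count grows exponentially with width.
    return num_phones ** (2 * width + 1)


if __name__ == "__main__":
    # Illustrative ARPAbet-style transcription of "street".
    street = ["s", "t", "r", "iy", "t"]
    print(context_units(street, width=1))  # tri-phones
    print(context_units(street, width=2))  # quin-phones
    # Assumed inventory of 40 phones.
    print(num_possible_units(40, width=1))  # tri-phones
    print(num_possible_units(40, width=2))  # quin-phones
```

With an assumed 40-phone inventory there are 64,000 possible tri-phones and over 100 million possible quin-phones, far more than any training corpus covers well, which is the data-sparsity problem; and because the window width is fixed, a coarticulatory effect that reaches further than the window is simply not modeled.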

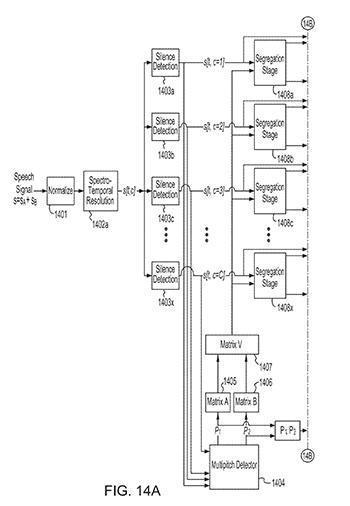

The researchers will investigate the use of estimated articulatory gestures in large-vocabulary automatic speech recognition. Gestural representations of the speech signal will first be created from the acoustic waveform using the Task Dynamic model of speech production. These data will then be used to train automatic models for articulatory gesture recognition, in which the articulatory gestures serve as subword units in the gesture-based ASR system. The researchers will evaluate the performance of a large-vocabulary gesture-based ASR system on American English (AE) and compare it with a set of competitive state-of-the-art recognition systems in terms of word and phone recognition accuracy, under both clean and noisy acoustic background conditions.

The broad impact of this research is threefold: (1) the creation of a large-vocabulary AE speech database containing acoustic waveforms and their articulatory representations, (2) the introduction of novel machine learning techniques to model articulatory representations from acoustic waveforms, and (3) the development of a large-vocabulary ASR system that uses articulatory representations as subword units.

The resulting robust, accurate ASR system for AE will deal effectively with speech variability, significantly enhancing communication and collaboration between people and machines in AE, and the method holds promise for generalization to multiple languages. The knowledge gained and the systems developed will contribute to the broad application of articulatory features in speech processing and have the potential to transform the fields of ASR, speech-mediated person-machine interaction, and automatic translation between languages.

This work will result in a set of databases and tools that will be disseminated to serve the research and education community at large.

Espy-Wilson is a University of Maryland 2012 Distinguished Scholar-Teacher. She will give a lecture to the university community, “Say What? Production, Perception and Variability of Speech” on Dec. 7.

Published September 28, 2012